This page is still under construction!

Summary

The Asset

This asset uses reinforcement learning (RL) from the Unity ML-Agents toolkit to generate high-quality 2D world maps out of tiles. It works in two stages: Training and Inference. Game developers who would rather use pre-trained agents than train their own can work from the Inference scene; training is performed in the Training scene of this asset. See Documentation below for the structure of the asset and how to perform Training and Inference, and Demo Game for an example of a live game that uses Inference. The Whitepapers section links to research in this area, which was the inspiration for this project.

Why Should we Care about RL-PCG?

Traditional procedural content generation (PCG) often requires layering many algorithms on top of one another until a suitable end result is reached. RL-PCG, on the other hand, places the resource burden on the developer during the training phase. At runtime, the player’s machine only has to run inference on a trained neural network, which can mean substantial performance gains for the player.

Need some Background Reading?

If you are not familiar with the Unity engine, I recommend the following readings:

If you are not familiar with Machine Learning (or some of the terminology I use), I recommend the following readings to get started:

Documentation

Installation

If you are interested in development using this project:

- Unity Game Engine (2018.4 or later)

- Create a New Project

- Use the Package Manager within Unity Game Engine to install the ML-Agents package

- Python (3.6.1 or higher)

- Install the ML-Agents Python package using this command:

pip3 install mlagents
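After installing, a quick check along these lines may help confirm the Python side is ready. This is a minimal sketch: the helper names `python_version_ok` and `package_installed` are hypothetical and not part of this asset or of ML-Agents; only the minimum version (3.6.1) and the package name `mlagents` come from the steps above.

```python
import importlib.util
import sys

# Minimum Python version listed in the installation steps above.
MIN_PYTHON = (3, 6, 1)


def python_version_ok(version=None):
    """Return True if the given (or currently running) interpreter is new enough."""
    if version is None:
        version = sys.version_info[:3]
    return tuple(version) >= MIN_PYTHON


def package_installed(name):
    """Return True if a top-level package can be located, without importing it."""
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    print("Python version OK:", python_version_ok())
    print("mlagents installed:", package_installed("mlagents"))
```

If either line reports `False`, revisit the corresponding installation step before opening the training scene.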

If you are interested in playing the demo game, see the demo game section.

Supporting Codebase

Features:

- Generating the 2D World Grid

- Assets/Editor/World_Generator_Editor_Window.cs (Do not remove from Editor Folder)

- The Tile that is Instantiated at each Point in the Grid

- Assets/Resources/2D_World_Gen_Prefabs/Tile_Parent.prefab

- Tile Properties – How values like elevation and moisture can be used to determine the tile (See Training section).

- Performance Enhancements – Necessary to make the game as efficient as possible at runtime.

- Scripts:

- Assets/2D_World_RLPCG/Scripts/Performance_Enhancement/Render_When_Visible.cs

- Camera – Feel free to adjust view/add your own visual effects.

- Scripts:

- Assets/2D_World_RLPCG/Scripts/User_Interface/Camera_Movement.cs

- Modeling – Low poly assets and sprites are best for this method of PCG, to reduce performance impacts.

- Materials

- Assets/2D_World_RLPCG/Materials
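To illustrate the Tile Properties item above, here is a minimal sketch of how values like elevation and moisture could be mapped to a tile type. It is written in Python for brevity rather than in the C# used by the asset's scripts. The thresholds, the `TileType` enum, and the `classify_tile` function are all assumptions made for illustration; the asset's actual rules may differ.

```python
from enum import Enum


class TileType(Enum):
    # Hypothetical tile categories, loosely named after the material folders.
    WATER = "water"
    BARREN = "barren"
    BUSHES = "bushes"
    MOUNTAINS = "mountains"


def classify_tile(elevation: float, moisture: float) -> TileType:
    """Map normalized elevation and moisture (both in [0, 1]) to a tile type.

    The thresholds below are illustrative assumptions, not values from the asset.
    """
    if not (0.0 <= elevation <= 1.0 and 0.0 <= moisture <= 1.0):
        raise ValueError("elevation and moisture must both be in [0, 1]")
    if elevation < 0.3:   # low ground is treated as water
        return TileType.WATER
    if elevation > 0.8:   # high ground is treated as mountains
        return TileType.MOUNTAINS
    # Mid elevations: moisture decides between vegetation and barren land.
    return TileType.BUSHES if moisture >= 0.5 else TileType.BARREN


if __name__ == "__main__":
    for elevation, moisture in [(0.1, 0.9), (0.5, 0.7), (0.5, 0.2), (0.9, 0.1)]:
        print(elevation, moisture, classify_tile(elevation, moisture).value)
```

Keeping the mapping in one small function makes it easy to adjust thresholds without touching the code that generates elevation and moisture.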

Directory Structure:

Note that Assets, Editor, and Resources are special folder names. You should not remove the scripts/objects within Editor or Resources without knowing what you are doing, or you will break the asset.

The following are folders involved with this project:

- Assets/2D_World_RLPCG:

- Materials – Stores the materials used to color the low-poly models.

- Barren, Bushes, Mountains, etc. folders are used to store the materials that correspond to different models stored in Objects.

- Objects – Stores the low-poly .FBX models; you can edit these in Blender.

- Scenes – Stores the Unity Scenes.

- 2D_World_RLPCG_Training – Scene used for training the RLPCG Agents.

- (TODO) 2D_World_RLPCG_Inference – Scene used for generating worlds using RLPCG Agents.

- Scripts – Stores the C# scripts that support the project.

- Metadata – Scripts that are used for data management/support for reinforcement learning.

- User_Interface – Scripts that are used for the user interface (GUI).

- Shaders – Stores the third-party open-source shaders used in the project. Read this page for more on Shaders.

- low-poly-water – Used for the low-poly water plane. Third-party open-source shader; you can find the author here:

- polygon-wind-master – Used to give trees and grass their swaying motion. Third-party open-source shader; you can find the author here:

- Assets/Editor – Holds scripts specific to the World_Generator_Editor_Window. (Special Folder)

- Assets/Resources – Holds prefabs that are used to instantiate the 2D World grid. (Special folder)

Training

This section covers how to set up Unity ML-Agents for this project. I’ve set up a training scene in this project that you can use as a baseline.

- Training on Multiple World Grids – Training on grids of varying sizes is good practice, because it helps the agents generalize.

- Hyperparameters

- Tile Properties, and how they fit into Training – Elevation, moisture, etc.
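As a rough illustration of training on multiple world grids, the sketch below samples a different grid size for each episode. The function names, the size bounds (8 to 64), and the use of a plain nested list as the grid are assumptions for illustration only; the training scene in this asset may set up its grids differently.

```python
import random


def sample_grid_size(rng, min_size=8, max_size=64):
    """Pick a random (width, height) for one training episode (bounds inclusive)."""
    width = rng.randint(min_size, max_size)
    height = rng.randint(min_size, max_size)
    return width, height


def make_empty_grid(width, height, fill=0):
    """Build a height x width grid whose rows are independent lists."""
    return [[fill] * width for _ in range(height)]


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so a run can be reproduced
    for episode in range(3):
        width, height = sample_grid_size(rng)
        grid = make_empty_grid(width, height)
        print(f"episode {episode}: {len(grid[0])} x {len(grid)} grid")
```

Varying the size per episode keeps the agent from overfitting to one particular grid shape.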

How Training Works

The key to RL is the reward (or utility) function.

For this project, I am using concept/selector networks to greatly simplify each individual neural network’s I/O and reward function.
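The general idea behind concept/selector networks can be sketched as follows: several small "concept" policies each handle one narrow sub-task with a small input, and a "selector" decides which concept acts at each step. In this sketch, hand-written rules stand in for trained networks, and every name (`elevation_concept`, `moisture_concept`, `selector`, the observation keys) is hypothetical rather than taken from this asset.

```python
from typing import Callable, Dict, Tuple

Observation = Dict[str, float]
Policy = Callable[[Observation], str]


def elevation_concept(obs: Observation) -> str:
    # Stand-in for a trained network that only reads elevation-related input.
    return "raise" if obs["neighbor_elevation"] > 0.5 else "lower"


def moisture_concept(obs: Observation) -> str:
    # Stand-in for a trained network that only reads moisture-related input.
    return "wet" if obs["neighbor_moisture"] > 0.5 else "dry"


CONCEPTS: Dict[str, Policy] = {
    "elevation": elevation_concept,
    "moisture": moisture_concept,
}


def selector(obs: Observation) -> str:
    # Stand-in for a selector network: handle elevation first, then moisture.
    return "elevation" if obs["elevation_set"] < 0.5 else "moisture"


def act(obs: Observation) -> Tuple[str, str]:
    """Return (chosen concept name, action produced by that concept)."""
    name = selector(obs)
    return name, CONCEPTS[name](obs)


if __name__ == "__main__":
    obs = {"neighbor_elevation": 0.8, "neighbor_moisture": 0.2, "elevation_set": 0.0}
    print(act(obs))
```

Because each concept reads only the inputs it needs and is rewarded only for its own sub-task, its I/O and reward function stay small.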

Tips on Reinforcement Learning

The following are general RL guidelines for successful projects.

- Examine the Reward Function before you begin training: Build tools that let you view how the model behaves before you begin training. In the case of this project, that could include being able to click on a tile and view the rewards, punishments, and so on that an RL Agent would receive for different decisions.

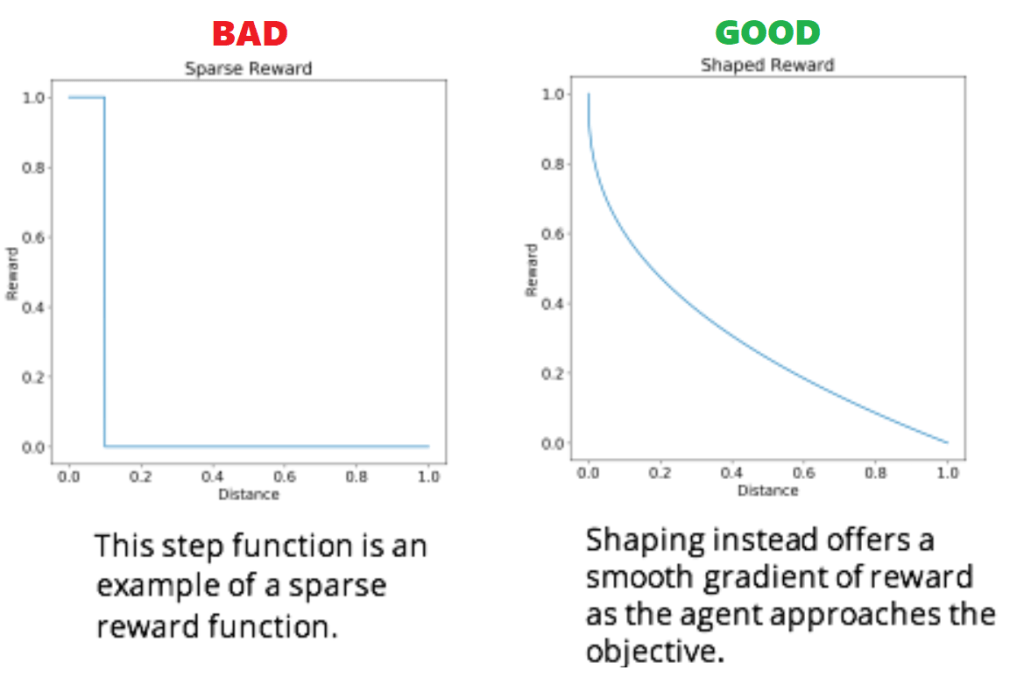

- Reward Function should provide Gradual Feedback: This is called reward shaping. If rewards are too sparse, the RL Agent may never (or at least, not for a very long time) converge on a good policy. A gradient of reward can dramatically increase training speed.

- Positive Rewards:

- Encourages agents to continue the task so they keep collecting the reward.

- As a result, agents tend to avoid end states unless those states provide a very large reward.

- Negative Rewards:

- Encourages agents to complete a task as quickly as possible, if there is a punishment for each step. Punishments per step can encourage an RL Agent to find the ‘quickest’ path forward.
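The tips above can be made concrete with a small sketch that contrasts a sparse reward with a shaped one and adds a per-step penalty. It also includes a tiny inspection helper in the spirit of examining the reward function before training. The target-elevation setup, the function names, and the constants are assumptions for illustration; they are not the reward function used in this project.

```python
def sparse_reward(tile_elevation, target_elevation, tolerance=0.05):
    """Reward only when the agent is (almost) exactly right; zero everywhere else."""
    return 1.0 if abs(tile_elevation - target_elevation) <= tolerance else 0.0


def shaped_reward(tile_elevation, target_elevation, step_penalty=0.01):
    """Reward grows smoothly as the tile approaches the target (values in [0, 1]).

    The small per-step penalty nudges the agent toward finishing quickly.
    """
    closeness = 1.0 - abs(tile_elevation - target_elevation)
    return closeness - step_penalty


def inspect_rewards(target_elevation, candidates):
    """Show the shaped reward each candidate decision would earn, before training."""
    return {c: round(shaped_reward(c, target_elevation), 3) for c in candidates}


if __name__ == "__main__":
    target = 0.9
    for candidate in (0.1, 0.5, 0.8, 0.9):
        print(candidate, sparse_reward(candidate, target), shaped_reward(candidate, target))
    print(inspect_rewards(target, [0.1, 0.5, 0.9]))
```

With the sparse version, most candidates earn the same zero reward, so the agent gets no hint about which direction is better. The shaped version ranks every candidate.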

Inference

This section covers deploying a game with RL-PCG. I’ve set up an inference scene in this project that you can use as a baseline.

- Barracuda – Unity’s runtime inference engine.

- Performance – The biggest hit is memory, i.e. loading the scene initially; once it’s loaded, inference is extremely fast.

- Setting up a World Generator – The inference scene in this projec

Whitepapers

This section includes whitepapers that support RL-PCG in video games, and are inspirational sources for this project.

- Khalifa, A., Bontrager, P., Earle, S., & Togelius, J. (2020). PCGRL: Procedural content generation via reinforcement learning. arXiv preprint arXiv:2001.09212.

- Cobbe, K., Hesse, C., Hilton, J., & Schulman, J. (2019). Leveraging procedural generation to benchmark reinforcement learning. arXiv preprint arXiv:1912.01588.

- Shaker, N., Togelius, J., & Nelson, M. J. (2016). Procedural content generation in games. Switzerland: Springer International Publishing.

- Perez-Liebana, D., Liu, J., Khalifa, A., Gaina, R. D., Togelius, J., & Lucas, S. M. (2019). General video game AI: A multitrack framework for evaluating agents, games, and content generation algorithms. IEEE Transactions on Games, 11(3), 195-214.

- Summerville, A., Snodgrass, S., Guzdial, M., Holmgård, C., Hoover, A. K., Isaksen, A., … & Togelius, J. (2018). Procedural content generation via machine learning (PCGML). IEEE Transactions on Games, 10(3), 257-270.

- Gudimella, A., Story, R., Shaker, M., Kong, R., Brown, M., Shnayder, V., & Campos, M. (2017). Deep reinforcement learning for dexterous manipulation with concept networks. arXiv preprint arXiv:1709.06977.

Demo Game

Coming roughly in November-December!